Fixing duplicate content often involves implementing canonical tags, 301 redirects, noindex meta tags, or disallow rules in robots.txt, all of which can reduce the number of indexed URLs. This is one case where a decrease in indexed pages might be a good thing.
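To make the four fixes above concrete, here is a minimal sketch of what each one looks like in practice. The helper names (`canonical_tag`, `noindex_meta`, `robots_disallow`, `redirect_301`) and the example URLs are hypothetical, chosen only for illustration; a real site would normally set these through its CMS or web server configuration.

```python
from html import escape


def canonical_tag(preferred_url: str) -> str:
    """Build the <link> element that points duplicates at the preferred URL."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'


def noindex_meta() -> str:
    """Build the meta tag asking search engines not to index the page."""
    return '<meta name="robots" content="noindex">'


def robots_disallow(paths, user_agent="*"):
    """Build a robots.txt group that disallows crawling of the given paths."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Disallow: {path}" for path in paths]
    return "\n".join(lines)


def redirect_301(duplicate_to_preferred, path):
    """Return (301, target) if the path is a known duplicate, else None.

    duplicate_to_preferred is a hypothetical dict mapping duplicate paths
    to the preferred path they should permanently redirect to.
    """
    target = duplicate_to_preferred.get(path)
    return (301, target) if target else None


if __name__ == "__main__":
    print(canonical_tag("https://example.com/shoes"))
    print(noindex_meta())
    print(robots_disallow(["/print/", "/search"]))
    print(redirect_301({"/shoes-old": "/shoes"}, "/shoes-old"))
```

Which fix fits depends on the duplicate: a canonical tag or noindex tag keeps the page reachable for visitors, a 301 redirect sends visitors and crawlers to the preferred URL, and a robots.txt disallow stops the page from being crawled.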

Related Article

Lead Pages

Digireload TeamAnother free landing page creation tool is Lead Pages. If you are looking for analytics integrated free landing page creation tool, Leadpages is wo...

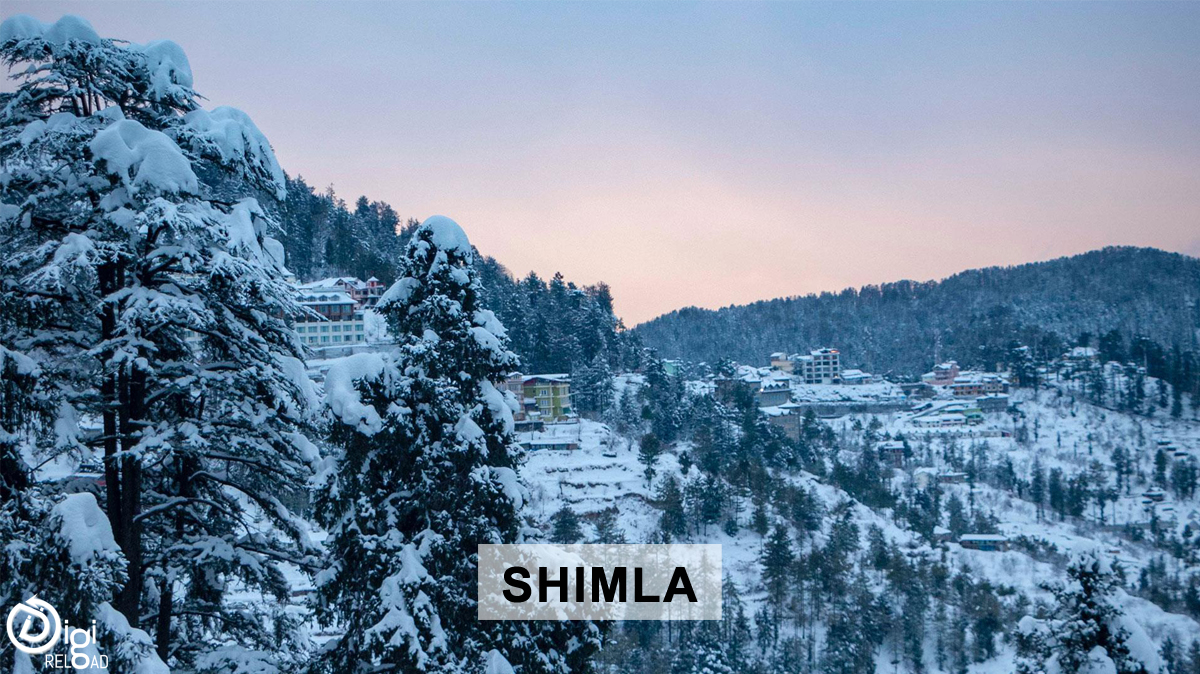

Shimla

Digireload TeamSettled among snow-covered mountains and oak, pine and rhododendron backwoods is the beautiful slope station of Shimla, where pilgrim time structur...

Watch video

Digireload TeamAnother way to earn money online watching videos. But these videos are in fact ads that you usually skipped on YouTube. But on online earning platf...

.png)